Why is AI still off limits in so many regulated organizations?

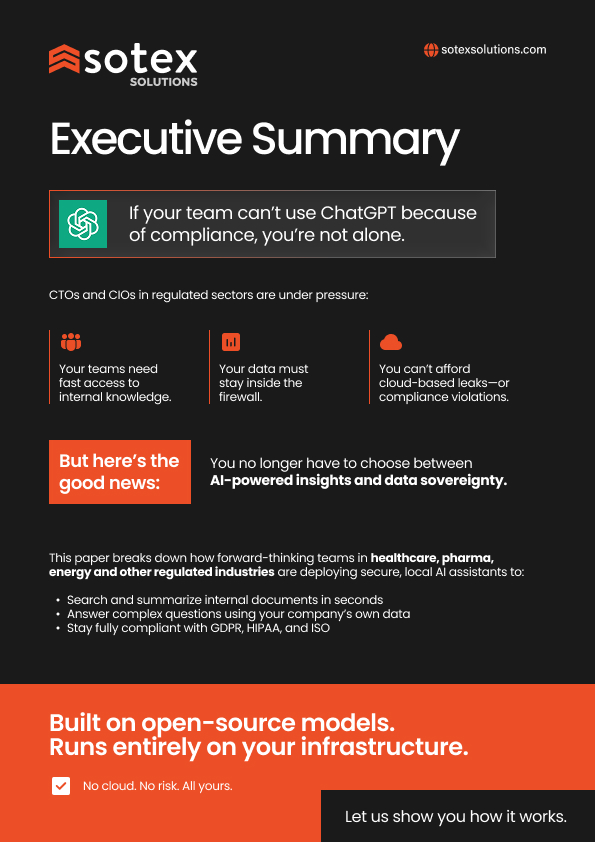

For organizations operating in regulated environments, the answer is clear: sensitive data, strict compliance requirements, and limited control over cloud infrastructure make most generative AI tools unusable in practice.

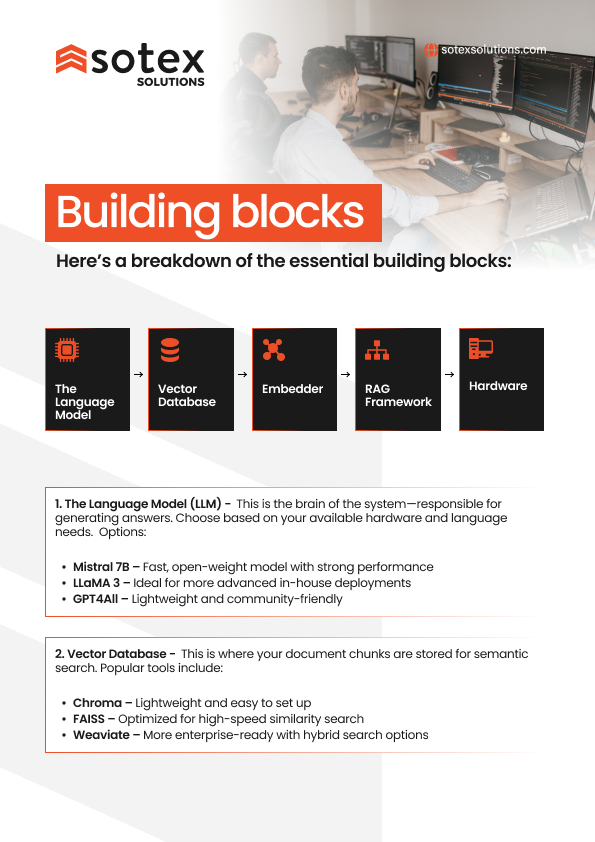

This guide explains how private AI changes that equation. It introduces the concept of running large language models (LLMs) entirely inside your own infrastructure, grounded in your internal documents and knowledge, without sending data outside the firewall.

Drawing on real projects in healthcare, pharma, and energy, our engineering teams show how private LLMs and retrieval-based architectures are already being used to search internal documentation, support complex decision-making, and reduce time spent navigating fragmented systems, all while staying aligned with GDPR, HIPAA, and ISO requirements.

Download the guide to understand what private AI looks like in real deployments and how regulated organizations are starting to use AI on their own terms.